O.T.T.O.

Operational Technician & Tactical Observer - a persistent AI system that started as SPDRSNS (an AI journal with Gemini, semantic search, and wellness tracking), grew into SPDR (a Raspberry Pi in a 3D-printed enclosure with wake word detection, face recognition, and a privacy engine), evolved through Clawdbot and local multi-model testing on an RTX 3090, and is now a production assistant that lives on your glasses, learns who you are, and never forgets.

The Problem

AI Without Continuity

Every AI conversation starts from zero. No memory of what you told it yesterday, no awareness of your environment, no understanding of your projects or who you are. You become the bridge between sessions - repeating context, re-explaining preferences, rebuilding trust every single time.

Screens as Bottlenecks

The phone is a powerful tool trapped behind a glass rectangle. Every interaction demands your full attention - stop what you're doing, pull out a device, type, wait, read. For AI to truly augment your capabilities, it needs to exist in your periphery, not demand you stop living to use it.

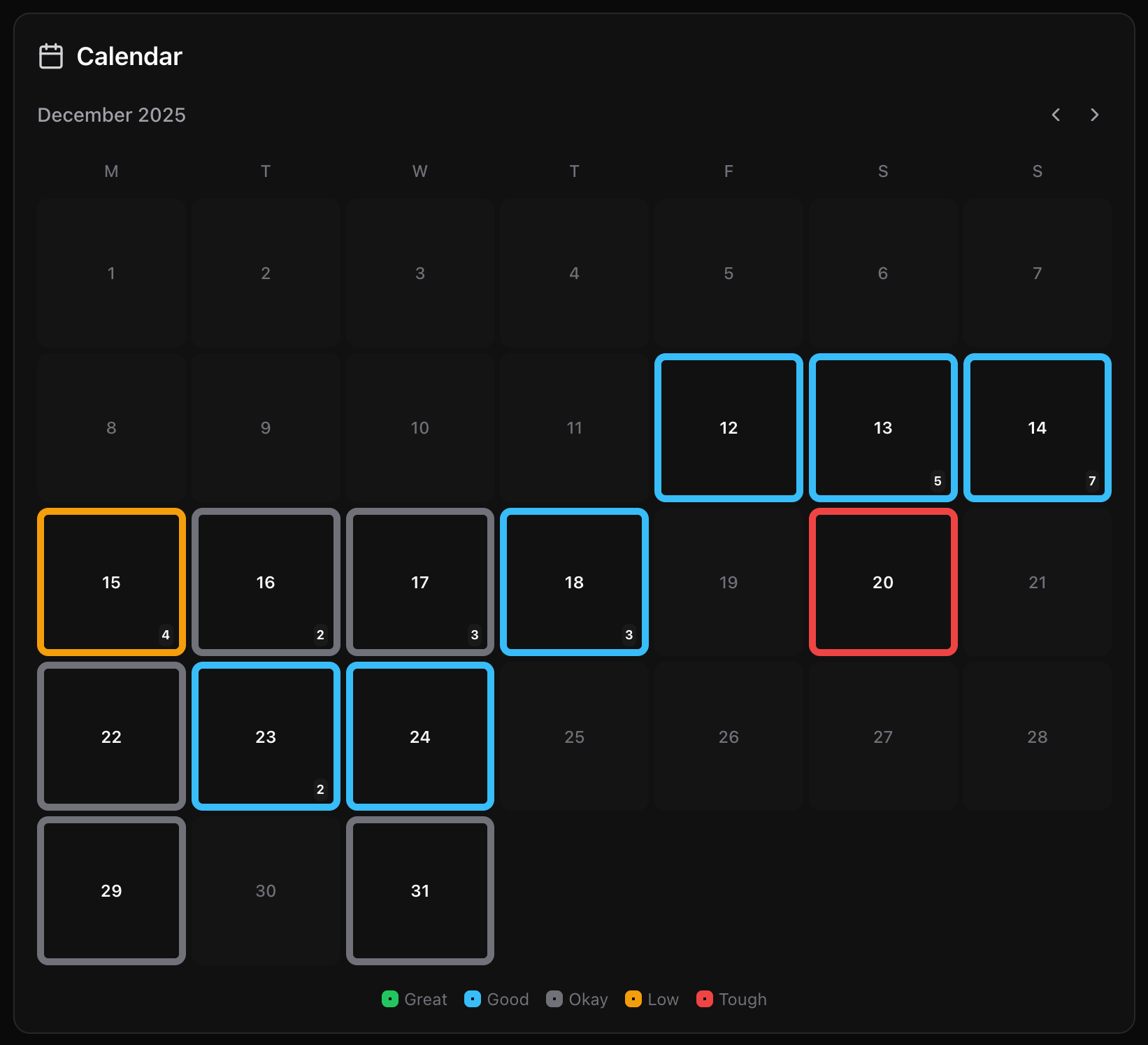

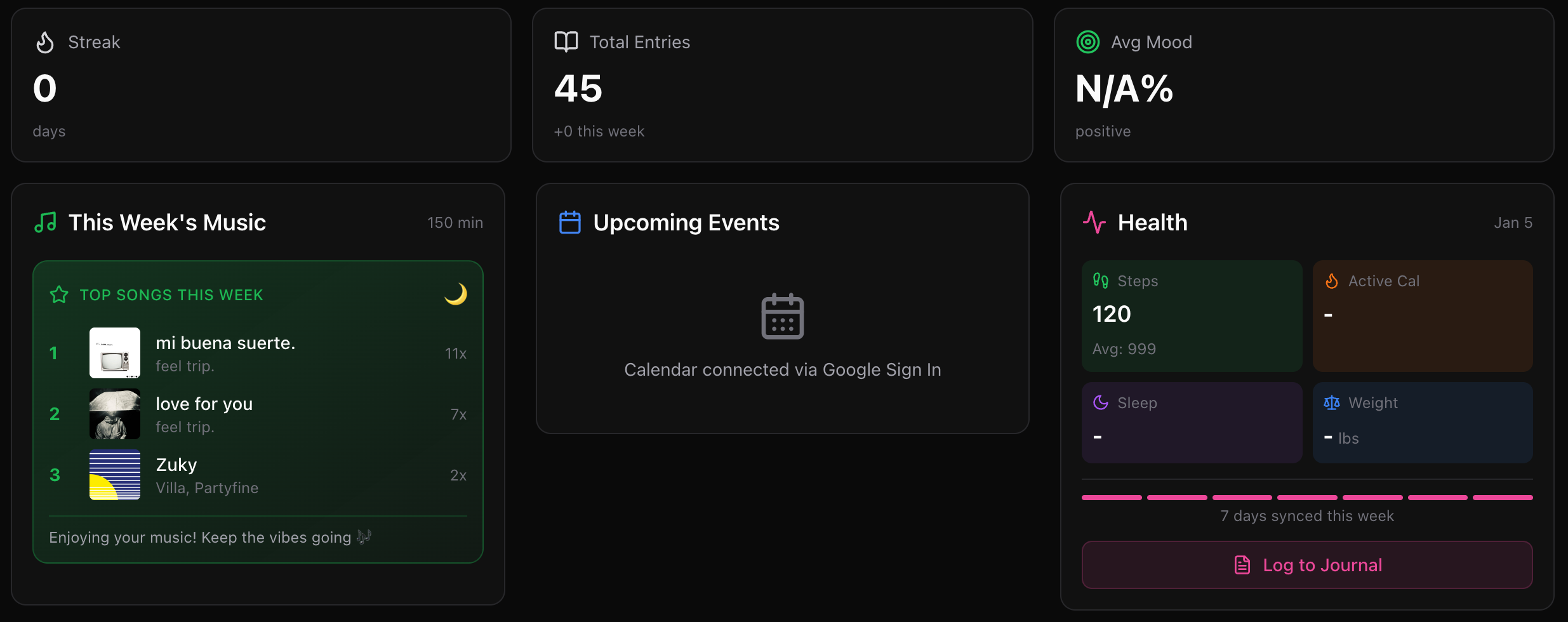

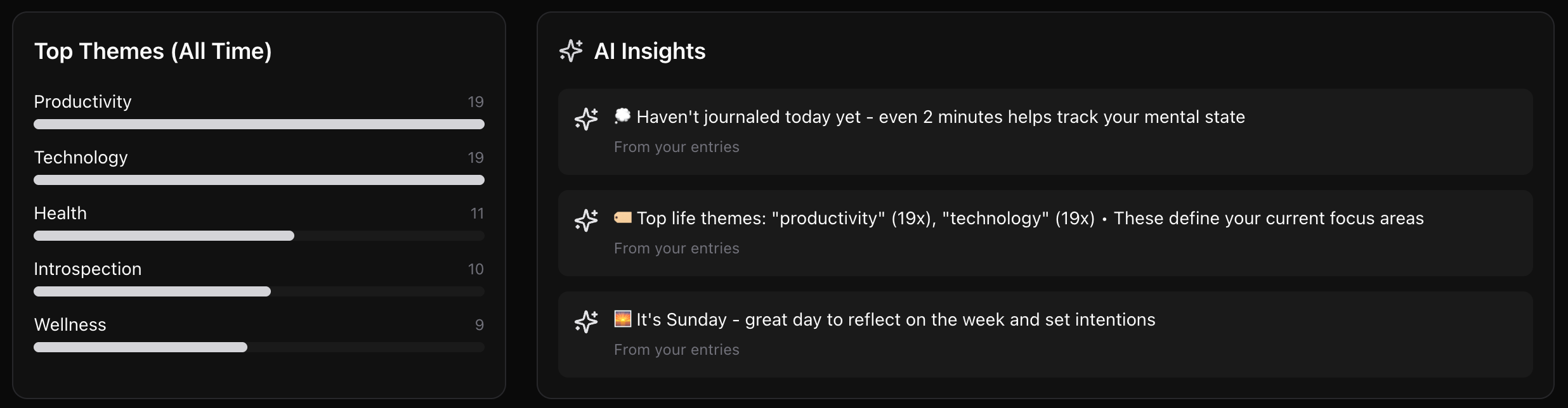

SPDRSNS - The Web Brain

Building a Portable Intelligence

It started as SPDRSNS (SPDR Sense) - a full-stack AI journal and personal intelligence platform built on Next.js 15 and Gemini 2.0 Flash. Not a chatbot. A system that ingested journal entries, wellness data, Spotify listening history, Google Calendar events, and health metrics - then used AI to find patterns across all of it. Mood trends, sleep correlations, music-to-emotion mapping. Everything stored in Supabase with pgvector embeddings for semantic search.
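To make the semantic search idea concrete, here is a minimal sketch of ranking entries by cosine similarity in plain Python. In the real system this ranking would presumably run inside Postgres through pgvector. The `Entry` type, the `search` function, and the toy three-dimensional vectors are assumptions for illustration, not the SPDRSNS code.

```python
import math
from dataclasses import dataclass


@dataclass
class Entry:
    text: str
    embedding: list[float]  # toy vector; real embeddings have hundreds of dimensions


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query_embedding: list[float], entries: list[Entry], top_k: int = 2) -> list[Entry]:
    """Return the top_k entries most similar to the query embedding."""
    ranked = sorted(
        entries,
        key=lambda e: cosine_similarity(query_embedding, e.embedding),
        reverse=True,
    )
    return ranked[:top_k]


# Hypothetical journal entries with hand-made embeddings.
entries = [
    Entry("Slept badly, skipped the gym", [0.9, 0.1, 0.0]),
    Entry("Great run this morning", [0.1, 0.9, 0.1]),
    Entry("Tired all day after a late night", [0.8, 0.2, 0.1]),
]
results = search([1.0, 0.0, 0.0], entries)
```

With these toy vectors, a query pointing along the first axis surfaces the two sleep-related entries ahead of the running one, which is the behaviour that lets a search for "fatigue" find entries that never use that word.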

The core idea: build an AI foundation that genuinely knows you - your habits, your health, your work patterns, your music taste, your goals - and own that data entirely. No third-party model training on your journal entries. No corporate AI holding your personal context hostage. Your intelligence layer, your database, portable across whatever body you give it next.

That portability became the entire thesis. The same Supabase brain that powered the web dashboard would later connect to a Raspberry Pi with a camera and microphone, then to smart glasses on your face. The body changes. The brain persists. SPDRSNS was the foundation that made that possible.

What Was Built

- 01 AI journal with Gemini analysis - mood, themes, observations per entry

- 02 Semantic search via pgvector embeddings across all entries

- 03 Spotify, Google Calendar, and wellness data integration

- 04 Knowledge base with auto-extraction and spaced repetition

- 05 Goal tracking with AI-powered progress analysis

- 06 Multi-model fallback - Gemini primary, Claude and GPT as backup
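
The multi-model fallback in the last item can be sketched as an ordered list of providers tried one after another. This is an illustrative pattern only: `complete_with_fallback` and the three stand-in functions are hypothetical, and real code would call the Gemini, Claude, and GPT clients where the stubs are.

```python
from typing import Callable, Sequence

# Hypothetical provider type: takes a prompt, returns text, raises on failure.
Provider = Callable[[str], str]


def complete_with_fallback(
    prompt: str, providers: Sequence[tuple[str, Provider]]
) -> tuple[str, str]:
    """Try each provider in order; return (name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Stand-in functions that simulate a primary outage and two working backups.
def gemini(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def claude(prompt: str) -> str:
    return f"analysis of: {prompt}"


def gpt(prompt: str) -> str:
    return f"backup analysis of: {prompt}"


used, reply = complete_with_fallback(
    "today's journal entry",
    [("gemini", gemini), ("claude", claude), ("gpt", gpt)],
)
```

Keeping the order in a plain list means the primary and backup roles can be changed without touching the calling code.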

Data Privacy

Every byte lives in a personal Supabase instance - not OpenAI's servers, not Google's cloud. Journal entries, health data, conversation history, knowledge graphs - all self-hosted. The AI reads from your database, not the other way around. When the body changes (Pi, glasses, desktop), the data stays yours and travels with you.

Stack

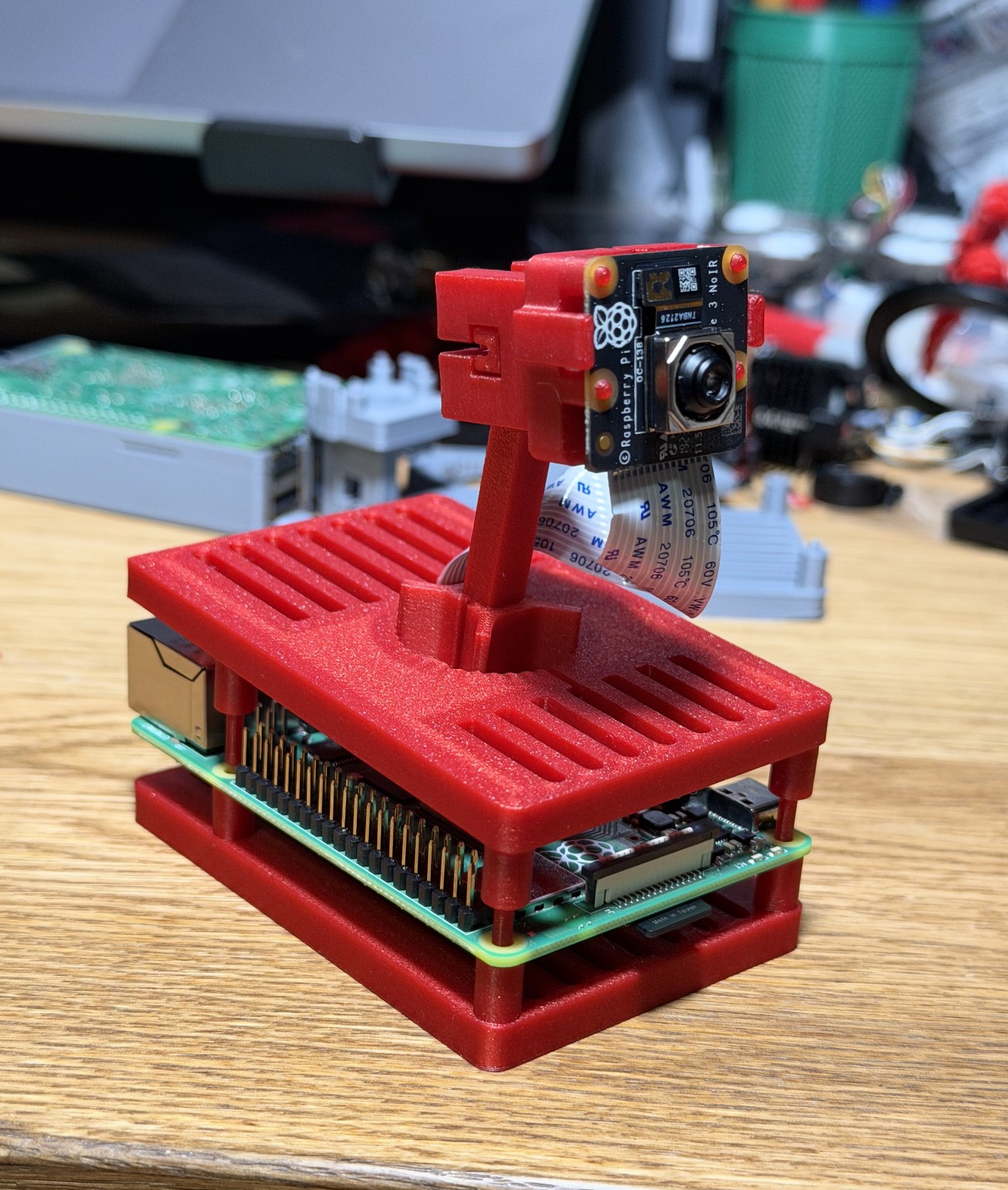

SPDR - Giving It a Body

V1: Wired

SPDRSNS proved the concept. Now it needed a physical presence. SPDR was a Raspberry Pi 5 running 2,200+ lines of Python: wake word detection via Porcupine (“Spider”, later “OTTO”), Whisper for speech-to-text, pyttsx3 for voice output, and a Pi camera for real-time vision. A custom 3D-printed enclosure, designed in OpenSCAD, housed everything. The unit connected to SPDRSNS as its cloud brain, syncing observations and conversations back to Supabase.

It could identify people through face recognition, voice embeddings, body silhouettes, and even clothing color. A full privacy engine with master/public/cautious/locked modes controlled what it shared based on who was in the room. It auto-detected tasks from overheard speech, asked curious questions when idle, and streamed a live camera feed to the web dashboard. Not a toy - a working AI companion. But it was tethered to the wall.
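
A privacy engine of this kind can be sketched as two small functions: one picks a mode from who is currently recognised, the other checks whether a piece of information may be spoken in that mode. The four mode names come from the description above. The selection rules, the ordering of the modes, and the sensitivity scale are assumptions made for illustration, not SPDR's actual logic.

```python
from enum import Enum


class Mode(Enum):
    MASTER = "master"
    PUBLIC = "public"
    CAUTIOUS = "cautious"
    LOCKED = "locked"


# Assumed scale: items are rated 1 (harmless) to 3 (private).
# Each mode allows items up to its ceiling; a ceiling of 0 shares nothing.
MAX_SENSITIVITY = {Mode.LOCKED: 0, Mode.CAUTIOUS: 1, Mode.PUBLIC: 2, Mode.MASTER: 3}


def select_mode(present: set[str], owner: str, trusted: set[str]) -> Mode:
    """Pick a mode from the identities recognised in the room (assumed rules)."""
    if not present:
        return Mode.LOCKED  # nobody recognised: share nothing
    if present == {owner}:
        return Mode.MASTER  # owner alone: full access
    if owner in present and present <= (trusted | {owner}):
        return Mode.PUBLIC  # owner plus known people only
    return Mode.CAUTIOUS  # someone unknown is present, or the owner is absent


def may_share(mode: Mode, sensitivity: int) -> bool:
    """True if an item with the given sensitivity may be shared in this mode."""
    return sensitivity <= MAX_SENSITIVITY[mode]


# Hypothetical identities as they might come out of face or voice recognition.
mode = select_mode({"alex", "sam"}, owner="alex", trusted={"sam"})
```

Separating mode selection from the sharing check keeps the recognition code (face, voice, silhouette) independent of the policy that decides what gets said out loud.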

V2: Wireless

Cut the cord with external batteries. Same brain, same body, now mobile. No longer anchored to a power outlet, the Pi unit became portable - a self-contained AI assistant that could go wherever you went. Still chunky, still a dedicated box, but now untethered and proving that mobility mattered.

Hardware

- → Raspberry Pi 5 + Pi Camera

- → USB mic + speaker + LED ring

- → 3D printed enclosure (OpenSCAD)

- → External battery pack (V2)

Software

- → Porcupine wake word (“Spider”)

- → Whisper STT + pyttsx3 TTS

- → Face + voice + body recognition

- → Privacy engine (4 security modes)

- → Ambient learning + task detection

Clawdbot - Computer Use & Local Models

First-Week Adopter

When Claude Computer Use launched as Clawdbot, I was on it the first week. Set it up on a spare MacBook, fully sandboxed - because I understood the security risks of giving an AI full computer access. Built out dedicated accounts and email addresses specifically for Clawdbot to use, so it could operate independently without touching personal credentials.

Working and testing with Clawdbot became a constant feedback loop. New updates from Anthropic meant new capabilities to explore. Every session was both using the tool and studying it - understanding what worked, what broke, and what could be built on top of it.

Multi-Model, Local-First

Clawdbot was powered by multiple models - Opus 4.5, Sonnet, Kimi K2.5, Qwen 3, and more. But the real breakthrough was running them all locally on my PC with an NVIDIA RTX 3090. No cloud dependency for inference. Full privacy. Full ownership of the data flowing through the system.

This phase was about testing the boundaries - privacy, ownership, security, and building out my own system architecture. What does it mean to have AI that runs entirely on hardware you own? What changes when the model weights live on your machine? These weren't theoretical questions anymore.

Models Running Locally

All inference on NVIDIA RTX 3090 - zero cloud dependency

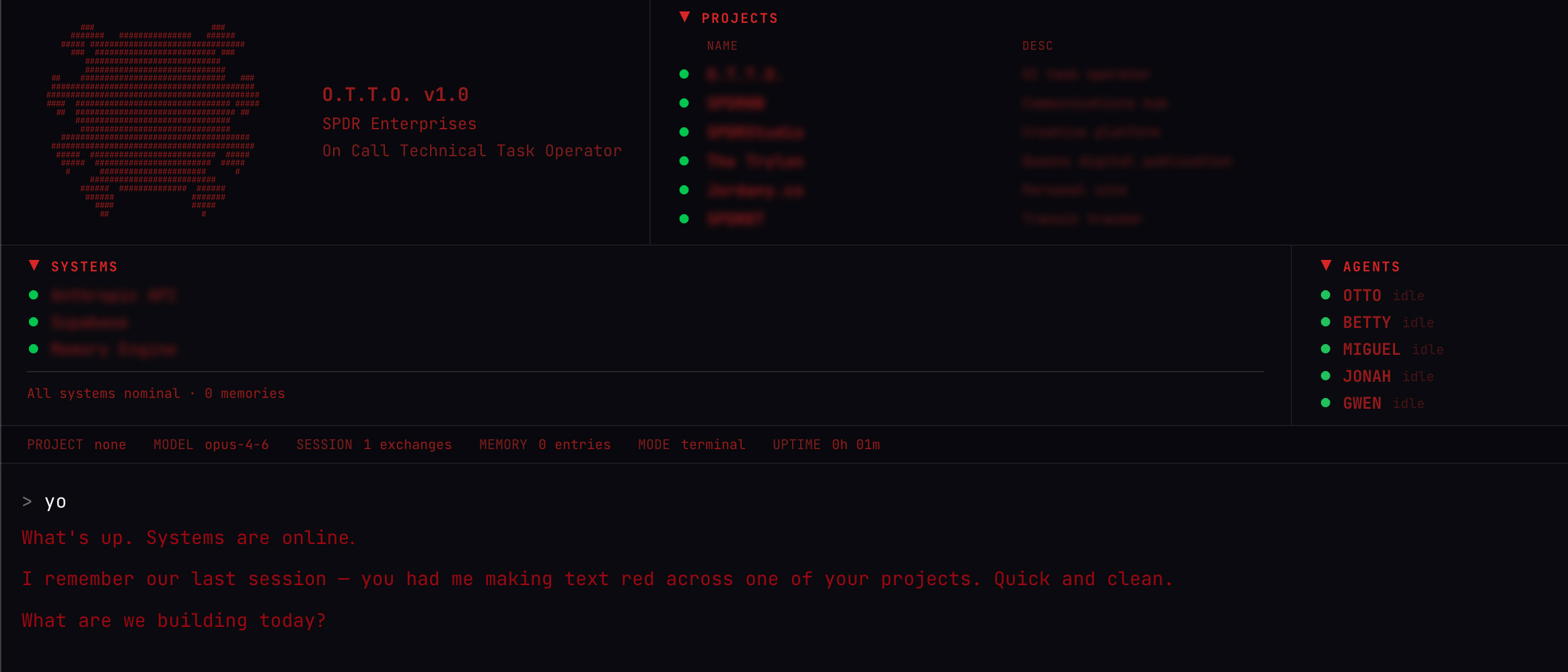

OTTO - The Best of Everything

All Learnings. One System.

After extensive testing on sandboxed builds, OTTO V4 was built on my personal PC - powered by Claude, combining every lesson from every previous version. The web app's persistent memory. The Pi's physical presence. Clawdbot's multi-model intelligence and local inference capabilities. This is the culmination.

OTTO is persistent across sessions. It builds its own memory and database over time. It learns about me - what I do, who I am, how I work, what I care about. Every interaction makes it smarter, more contextual, more useful. It doesn't start from zero. It picks up where we left off.

The best of all projects. Continues to evolve.

Persistent Memory

Supabase-backed long-term storage - facts, visual observations, work logs, meeting transcripts, user preferences. Tell OTTO something once. It remembers across every session. The database grows as the relationship deepens.

Multi-Model Intelligence

Gemini for vision. GPT-4o for conversation. Claude for reasoning. Llama for speed. Switch models on the fly via voice command. Each model selected for its strength on the task at hand.
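
One way to picture this routing is a task-to-model table with an optional override that a voice command can set. The table mirrors the pairings listed above. The `ModelRouter` class, the "switch to ..." phrasing, and the string identifiers are hypothetical, not OTTO's actual interface.

```python
# Task-to-model pairings from the text; the identifiers are placeholders.
DEFAULT_ROUTES = {
    "vision": "gemini",
    "conversation": "gpt-4o",
    "reasoning": "claude",
    "quick": "llama",
}


class ModelRouter:
    def __init__(self, routes=None):
        self.routes = dict(routes or DEFAULT_ROUTES)
        self.override = None  # set when the user pins a model by voice

    def handle_command(self, utterance: str) -> bool:
        """Handle an assumed 'switch to <model>' or 'switch to auto' command.

        Returns True if the utterance was a recognised switch command.
        """
        words = utterance.lower().strip().split()
        if words[:2] != ["switch", "to"] or len(words) < 3:
            return False
        target = " ".join(words[2:])
        if target == "auto":
            self.override = None  # go back to per-task routing
            return True
        if target in self.routes.values():
            self.override = target
            return True
        return False

    def pick(self, task: str) -> str:
        """Return the pinned model if any, else the model mapped to the task."""
        if self.override:
            return self.override
        # Unknown task types fall back to the conversation model.
        return self.routes.get(task, self.routes["conversation"])


router = ModelRouter()
default_vision_model = router.pick("vision")
router.handle_command("Switch to Claude")
pinned_model = router.pick("vision")
```

After the switch command, every task goes to the pinned model until "switch to auto" restores per-task routing.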

SPDR Agent Network

OTTO doesn't work alone. Connected to the SPDR agent network, it delegates research, code tasks, and scheduling to specialized agents. A distributed intelligence system, accessible through a single voice.

Core Capabilities

Voice-First Interaction

Natural conversation with persistent context. Wake word activation, concise spoken responses, conversation history that carries across sessions. Talk to it like a person.

Spatial Vision

Camera integration for real-time scene understanding. Photo capture, OCR for reading signs and menus, background visual memory. GPS-tagged observations stored to Supabase.

Live Translation

Point-and-read OCR with instant translation across 20+ languages. Japanese menus, Korean street signs, French documents - all through a voice command and a glance.

Persistent Memory

Facts, visual observations, work logs, meeting transcripts - stored across sessions. Tell OTTO your wifi password once. It remembers forever. Ask about something from weeks ago - it recalls.
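
The "tell it once" behaviour comes down to writing facts to durable storage and reading them back in a later session. The sketch below uses Python's built-in `sqlite3` as a stand-in for the Supabase (Postgres) backend. The `MemoryStore` class and the `facts` table are assumptions for illustration, not OTTO's schema.

```python
import os
import sqlite3
import tempfile


class MemoryStore:
    """Tiny key/value fact store; sqlite3 stands in for the Supabase backend."""

    def __init__(self, path: str):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS facts ("
            "key TEXT PRIMARY KEY, "
            "value TEXT NOT NULL, "
            "updated_at TEXT DEFAULT CURRENT_TIMESTAMP)"
        )
        self.conn.commit()

    def remember(self, key: str, value: str) -> None:
        # INSERT OR REPLACE updates an existing fact instead of duplicating it.
        self.conn.execute(
            "INSERT OR REPLACE INTO facts (key, value) VALUES (?, ?)",
            (key, value),
        )
        self.conn.commit()

    def recall(self, key: str):
        """Return the stored value, or None if the fact is unknown."""
        row = self.conn.execute(
            "SELECT value FROM facts WHERE key = ?", (key,)
        ).fetchone()
        return row[0] if row else None

    def close(self) -> None:
        self.conn.close()


# Simulate two separate sessions sharing one database file.
db_path = os.path.join(tempfile.mkdtemp(), "otto_memory.db")

session_one = MemoryStore(db_path)
session_one.remember("coffee order", "oat flat white")
session_one.close()

session_two = MemoryStore(db_path)
recalled = session_two.recall("coffee order")
session_two.close()
```

The second session never sees the first one's process state, only the file, which is the property that lets a new conversation pick up where the last one ended.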

Local + Cloud Inference

Tested and proven on local hardware (RTX 3090) and cloud APIs. Privacy when you need it, power when you want it. Full control over where your data flows.

Agent Delegation

Connected to the SPDR agent network. Delegate research, code generation, scheduling to specialized agents while staying focused on the physical world.

Evolution

SPDRSNS

Foundation: Full-stack AI journal and personal intelligence platform. Gemini-powered analysis of entries, wellness data, Spotify, and calendar events. Semantic search via pgvector, auto-extracted knowledge base, goal tracking, and multi-model fallback. The cloud brain that everything else connected to.

SPDR - Wired

Retired: Gave the AI a body. RPi 5 running 2,200+ lines of Python: 'Spider' wake word via Porcupine, Whisper STT, Pi camera, face and voice recognition, privacy engine with 4 security modes, ambient learning from overheard speech. 3D-printed enclosure. Connected to SPDRSNS as its cloud brain. Wired to the wall, but alive.

SPDR - Wireless

Retired: Cut the cord with external batteries. Same brain, same body, now mobile. No longer anchored to a desk. Proved that an always-on AI assistant needs to move with you.

Clawdbot

Evolved: First-week adopter of Claude Computer Use. Sandboxed on a spare MacBook with dedicated accounts. Multi-model testing with Opus 4.5, Sonnet, Kimi K2.5, Qwen 3 - all running locally on a 3090. Deep exploration of privacy, ownership, and security.

O.T.T.O.

Active: The best of all projects. Built on personal PC after extensive sandboxed testing. Powered by Claude. Persistent across sessions, building its own memory and database. Learns who you are. Connected to SPDR agent network. Lives on glasses.

What's Next

Whole-Home Network

Standalone OTTOs throughout the house, replacing traditional smart assistants. Every room has a persistent, context-aware AI that knows who you are and what you're working on. Not a speaker that answers trivia - an intelligence that augments your life.

Always-On Glasses

OTTO already lives on Even Realities G2s and Mentra Live. The glasses give it visual and spatial input - it sees what you see, goes where you go. The AI moves with you, not the other way around.

Virtual Body in AR

Next step: give OTTO a virtual body through Snap Spectacles AR. Not just a voice in your ear - a presence in your space. More features, more modalities, more ways to interact with an AI that truly knows you.